> After two years of development and some deliberation, AMD decided that there is no business case for running CUDA applications on AMD GPUs. One of the terms of my contract with AMD was that if AMD did not find it fit for further development, I could release it. Which brings us to today.

So AMD already gave up on this, and if they hadn’t they’d have kept it proprietary?

To me, this is the sort of thing that highlights the inherent inefficiency of proprietary software and processes.

“Oh sorry, you’ll need our magic hardware in order to run this software. It simply can’t happen any other way.”

Turns out that wasn't true, which of course it isn't.

Imagine if instead everyone could have been working together on a fully open graphics compute stack. Sure, optimize it for the hardware you sell, why not, but then it’s up to the “best” product instead of the one with the magic software juice.

> but then it’s up to the “best” product

That’s exactly the part that explains why it didn’t happen.

A serious question: when will Nvidia stop selling their products and start charging rent? Something like 50 bucks a month for a 4070; your hardware can be a 4090, but that's 100 a month. I give it a year.

It’s more efficient to rent the same GPU to multiple people at the same time, and Nvidia is already doing that with GeForce Now.

Cue the Nvidia shills who will still find some reason why AMD is not objectively better.

There is still no support for ROCm on Linux, but this is still good to hear.

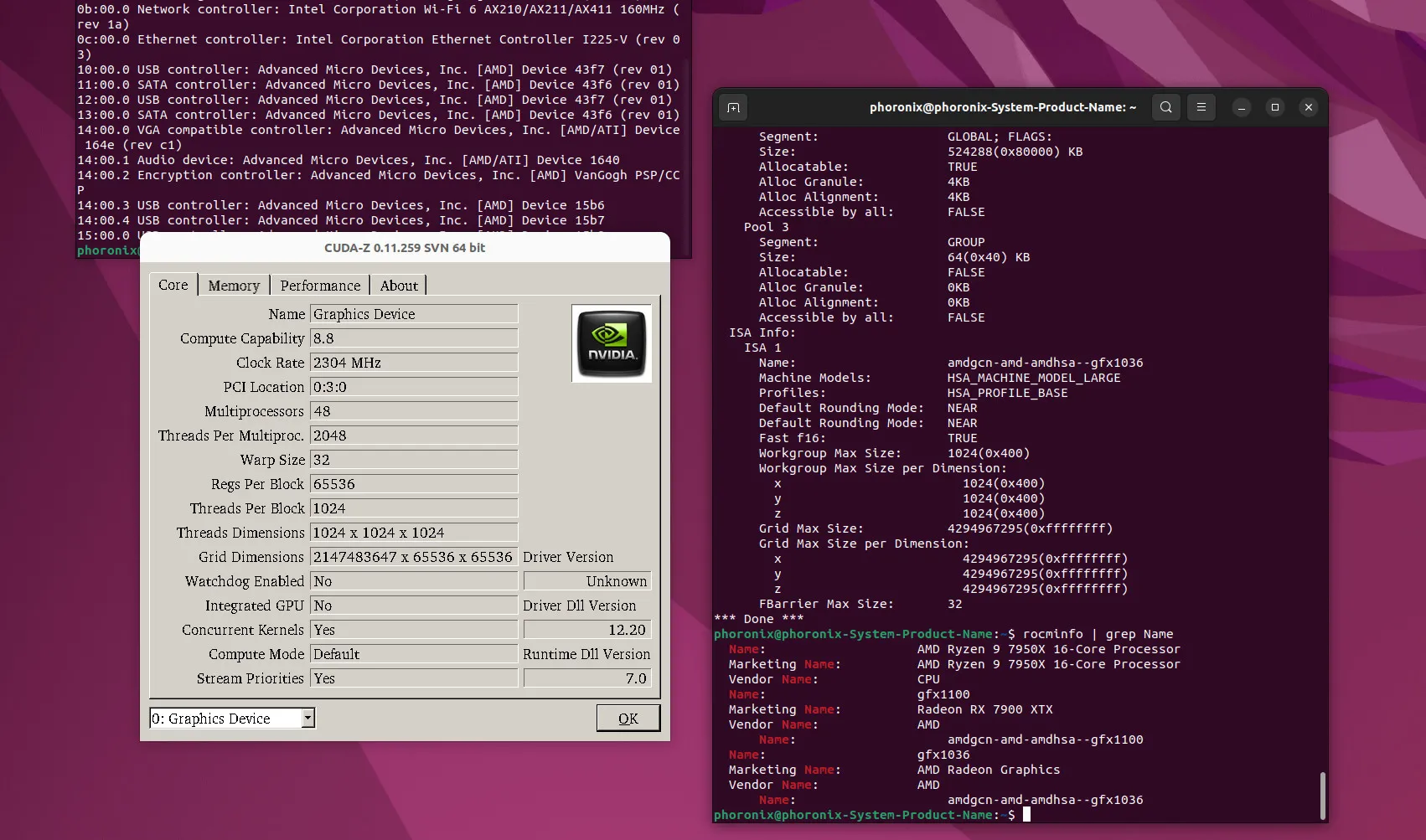

What do you mean? ROCm does support Linux, and so does ZLUDA.

https://rocm.docs.amd.com/projects/radeon/en/latest/docs/compatibility.html

https://rocblas.readthedocs.io/en/rocm-6.0.0/about/compatibility/linux-support.html

Yes, on four consumer-grade cards.

If I want a mid-range GPU with compute support on Linux, my only option is Nvidia.